Announcing general availability of Amazon Bedrock Knowledge Bases GraphRAG with Amazon Neptune Analytics

Today, Amazon Web Services (AWS) announced the general availability of Amazon Bedrock Knowledge Bases GraphRAG (GraphRAG), a capability in Amazon Bedrock Knowledge Bases that enhances Retrieval-Augmented Generation (RAG) with graph data in Amazon Neptune Analytics. This capability enhances responses from generative AI applications by automatically creating embeddings for semantic search and generating a graph of […]

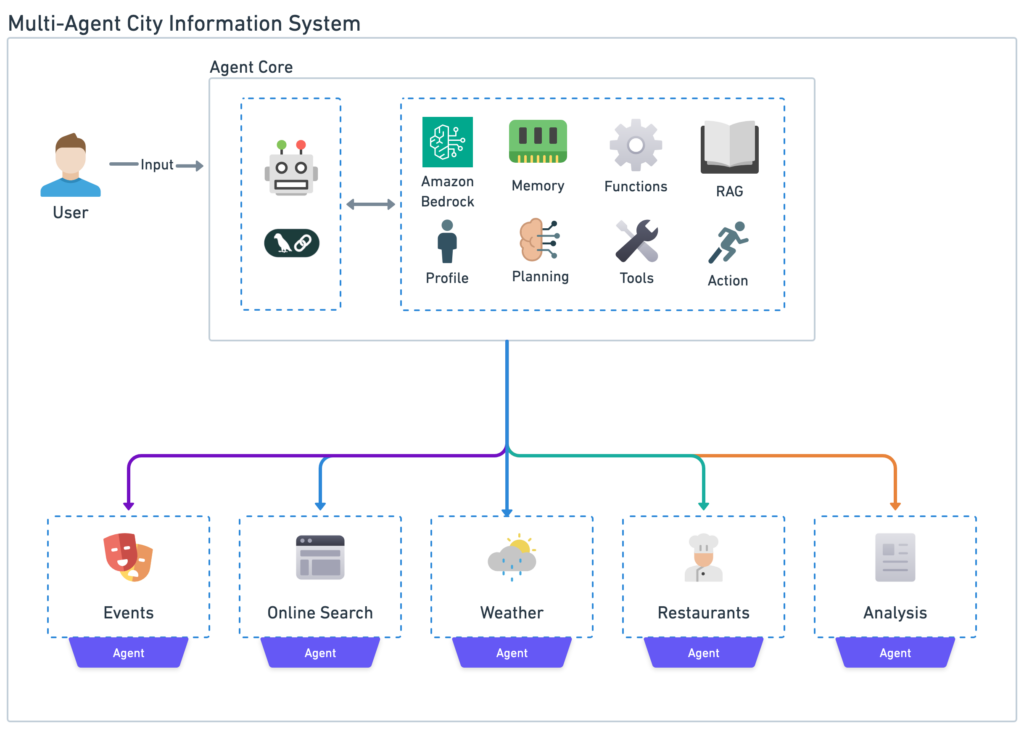

Build a Multi-Agent System with LangGraph and Mistral on AWS

Agents are revolutionizing the landscape of generative AI, serving as the bridge between large language models (LLMs) and real-world applications. These intelligent, autonomous systems are poised to become the cornerstone of AI adoption across industries, heralding a new era of human-AI collaboration and problem-solving. By using the power of LLMs and combining them with specialized […]

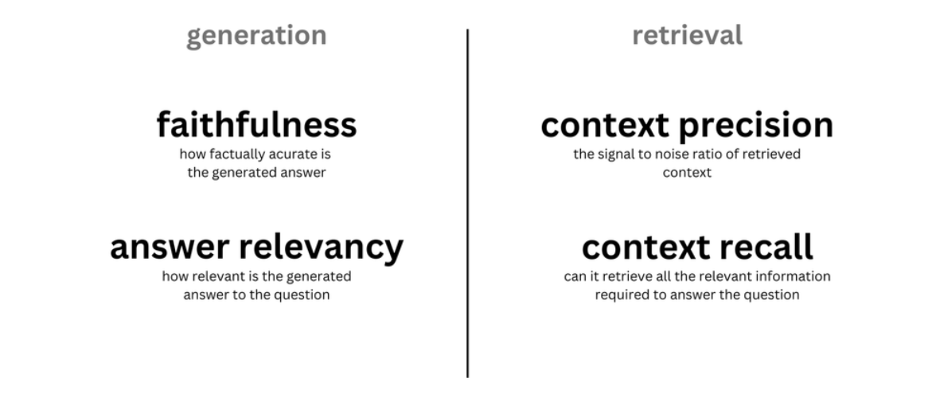

Evaluate RAG responses with Amazon Bedrock, LlamaIndex and RAGAS

In the rapidly evolving landscape of artificial intelligence, Retrieval Augmented Generation (RAG) has emerged as a game-changer, revolutionizing how Foundation Models (FMs) interact with organization-specific data. As businesses increasingly rely on AI-powered solutions, the need for accurate, context-aware, and tailored responses has never been more critical. Enter the powerful trio of Amazon Bedrock, LlamaIndex, and […]

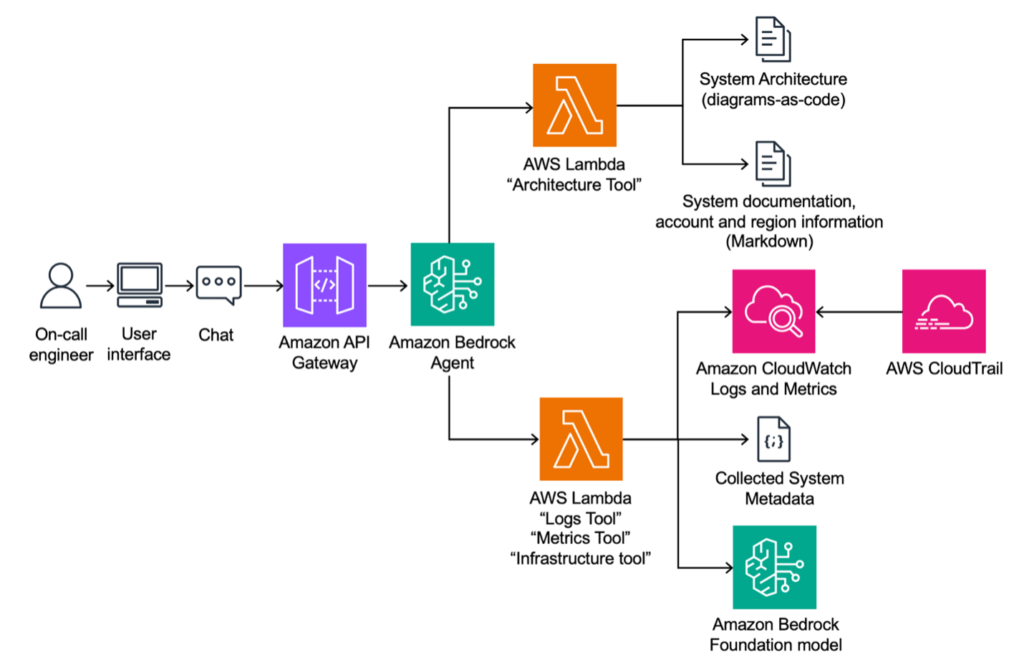

Innovating at speed: BMW’s generative AI solution for cloud incident analysis

This post was co-authored with Johann Wildgruber, Dr. Jens Kohl, Thilo Bindel, and Luisa-Sophie Gloger from BMW Group. The BMW Group, headquartered in Munich, Germany, is a vehicle manufacturer with more than 154,000 employees, 30 production and assembly facilities worldwide, and research and development locations across 17 countries. Today, the BMW Group (BMW) is the […]

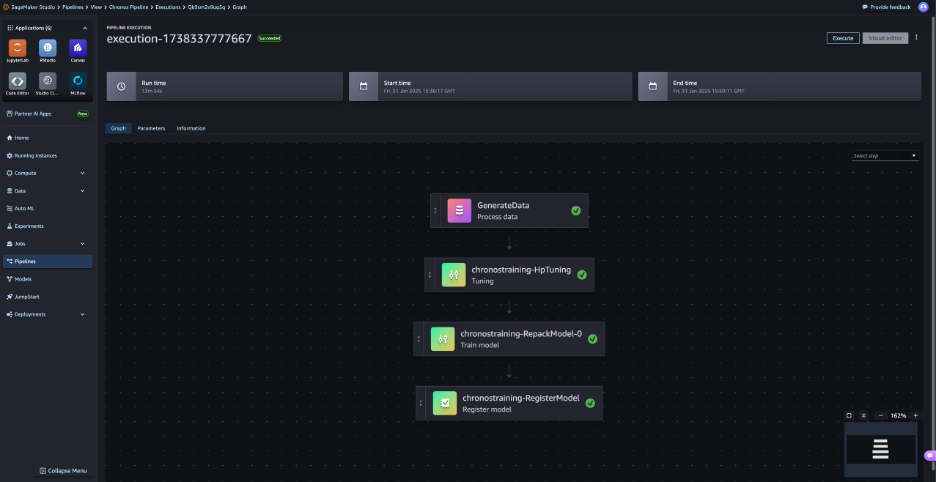

Time series forecasting with LLM-based foundation models and scalable AIOps on AWS

Time series forecasting is critical for decision-making across industries. From predicting traffic flow to sales forecasting, accurate predictions enable organizations to make informed decisions, mitigate risks, and allocate resources efficiently. However, traditional machine learning approaches often require extensive data-specific tuning and model customization, resulting in lengthy and resource-heavy development. Enter Chronos, a cutting-edge family of […]

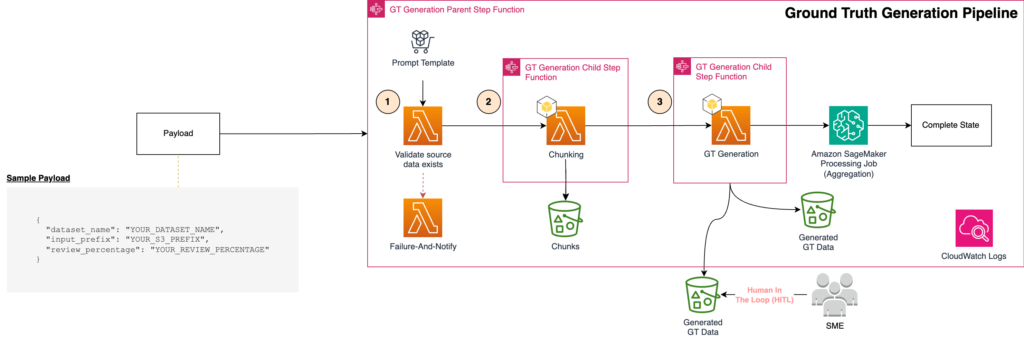

Ground truth generation and review best practices for evaluating generative AI question-answering with FMEval

Generative AI question-answering applications are pushing the boundaries of enterprise productivity. These assistants can be powered by various backend architectures including Retrieval Augmented Generation (RAG), agentic workflows, fine-tuned large language models (LLMs), or a combination of these techniques. However, building and deploying trustworthy AI assistants requires a robust ground truth and evaluation framework. Ground truth […]

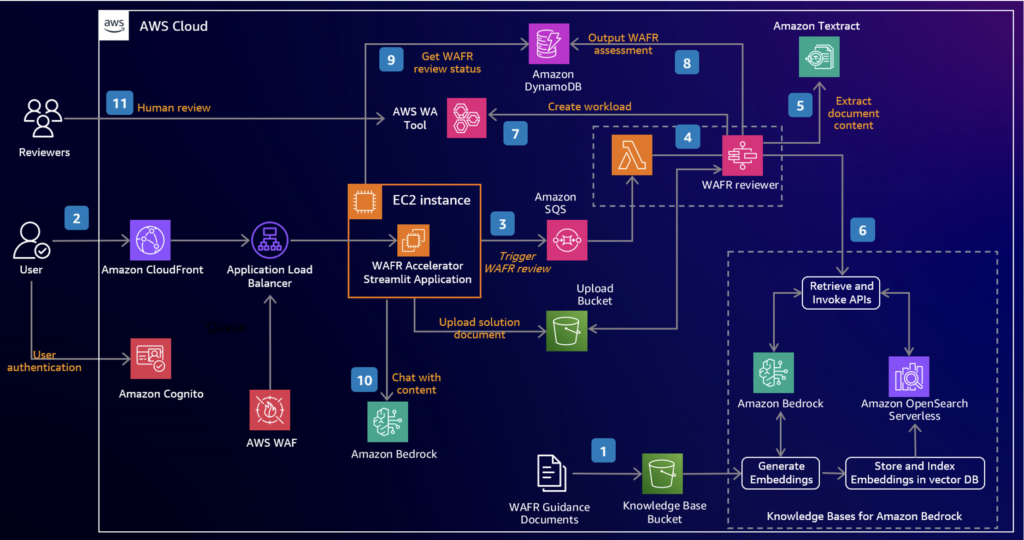

Accelerate AWS Well-Architected reviews with Generative AI

Building cloud infrastructure based on proven best practices promotes security, reliability and cost efficiency. To achieve these goals, the AWS Well-Architected Framework provides comprehensive guidance for building and improving cloud architectures. As systems scale, conducting thorough AWS Well-Architected Framework Reviews (WAFRs) becomes even more crucial, offering deeper insights and strategic value to help organizations optimize […]

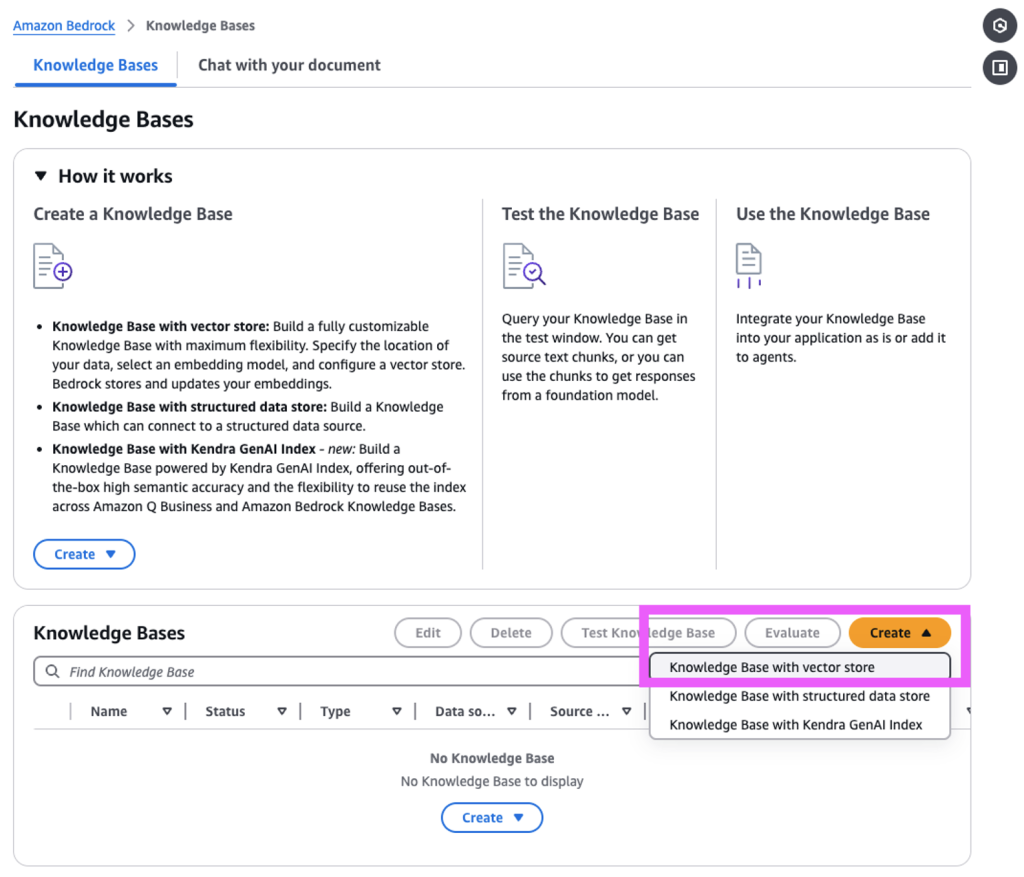

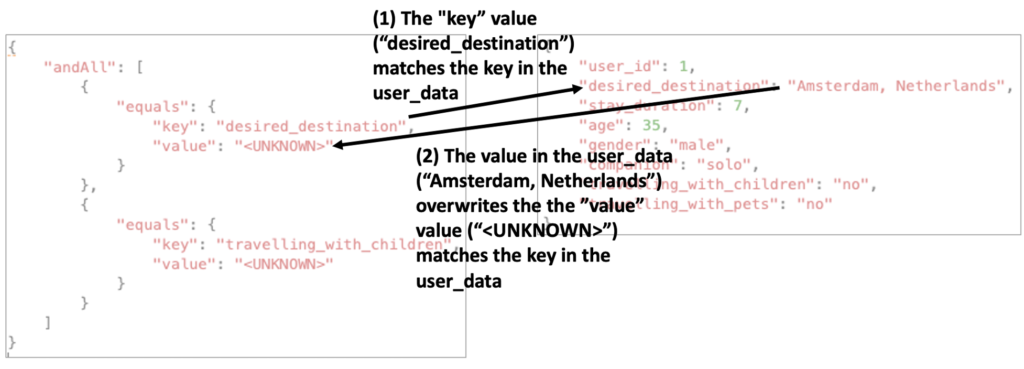

Dynamic metadata filtering for Amazon Bedrock Knowledge Bases with LangChain

Amazon Bedrock Knowledge Bases offers a fully managed Retrieval Augmented Generation (RAG) feature that connects large language models (LLMs) to internal data sources. It’s a cost-effective approach to improving LLM output so it remains relevant, accurate, and useful in various contexts. It also provides developers with greater control over the LLM’s outputs, including the ability […]

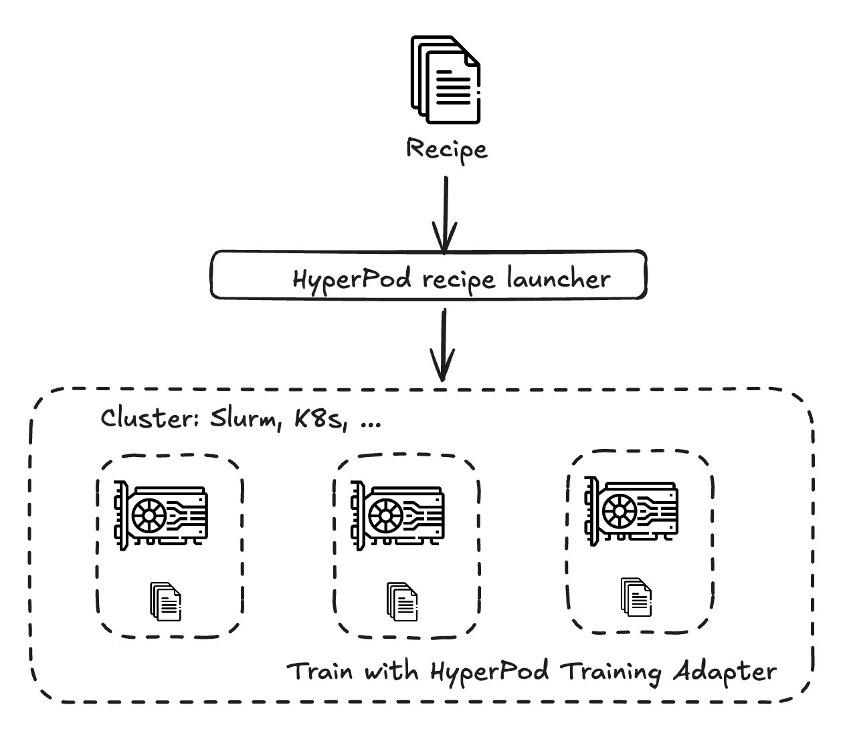

Customize DeepSeek-R1 distilled models using Amazon SageMaker HyperPod recipes – Part 1

Increasingly, organizations across industries are turning to generative AI foundation models (FMs) to enhance their applications. To achieve optimal performance for specific use cases, customers are adopting and adapting these FMs to their unique domain requirements. This need for customization has become even more pronounced with the emergence of new models, such as those released […]

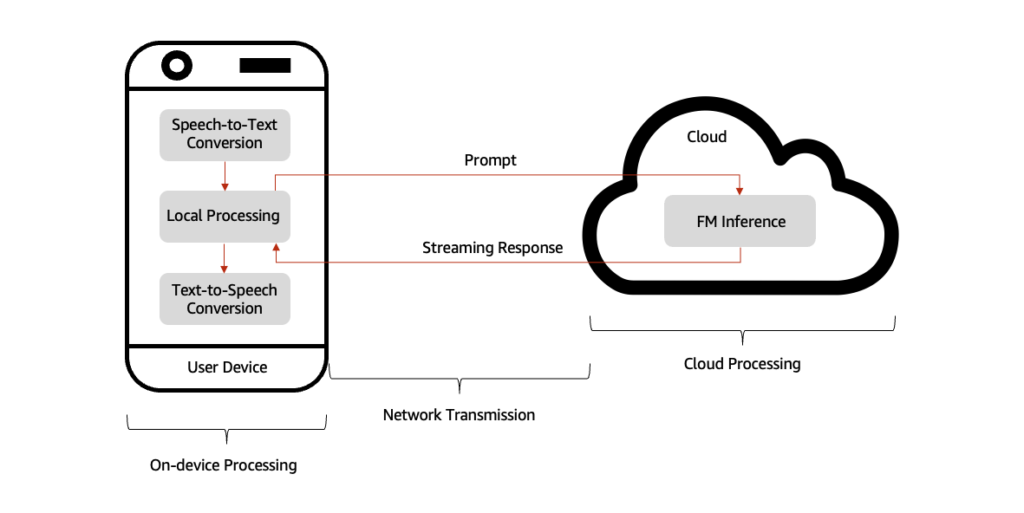

Reduce conversational AI response time through inference at the edge with AWS Local Zones

Recent advances in generative AI have led to the proliferation of a new generation of conversational AI assistants powered by foundation models (FMs). These latency-sensitive applications enable real-time text and voice interactions, responding naturally to human conversations. Their applications span a variety of sectors, including customer service, healthcare, education, personal and business productivity, and many others. […]