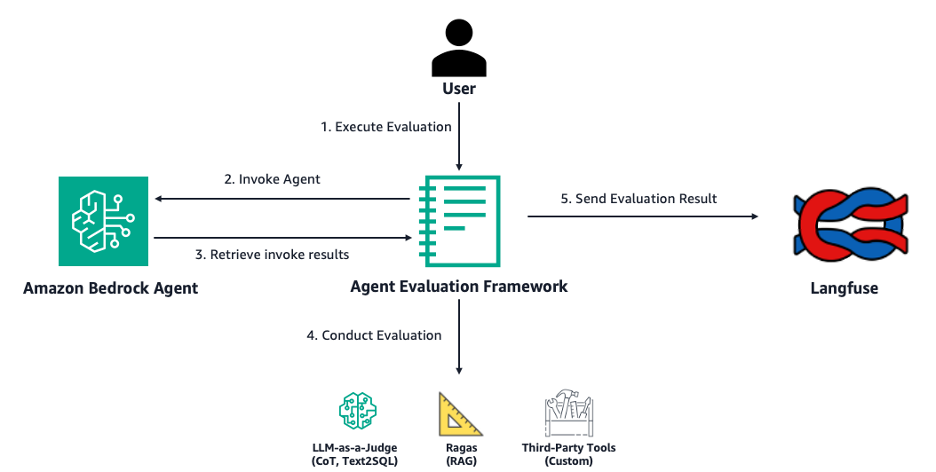

Evaluate Amazon Bedrock Agents with Ragas and LLM-as-a-judge

AI agents are quickly becoming an integral part of customer workflows across industries by automating complex tasks, enhancing decision-making, and streamlining operations. However, the adoption of AI agents in production systems requires scalable evaluation pipelines. Robust agent evaluation enables you to gauge how well an agent is performing certain actions and gain key insights into […]

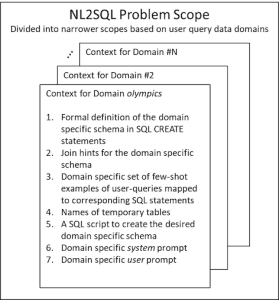

Enterprise-grade natural language to SQL generation using LLMs: Balancing accuracy, latency, and scale

This blog post is co-written with Renuka Kumar and Thomas Matthew from Cisco. Enterprise data by its very nature spans diverse data domains, such as security, finance, product, and HR. Data across these domains is often maintained across disparate data environments (such as Amazon Aurora, Oracle, and Teradata), with each managing hundreds or perhaps thousands […]

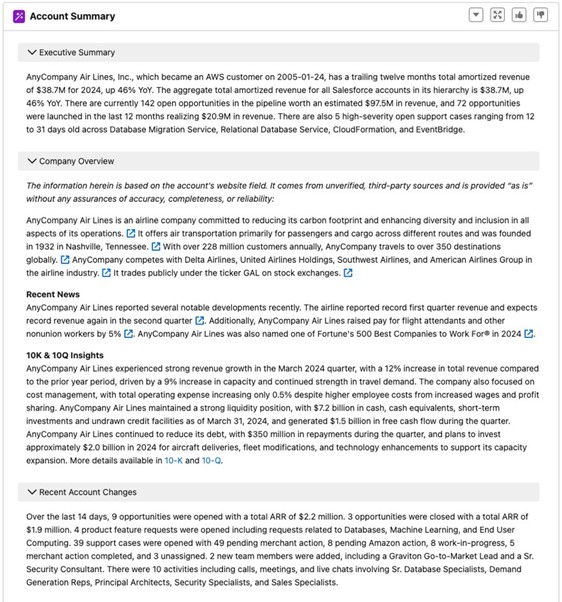

AWS Field Experience reduced cost and delivered low latency and high performance with Amazon Nova Lite foundation model

AWS Field Experience (AFX) empowers Amazon Web Services (AWS) sales teams with generative AI solutions built on Amazon Bedrock, improving how AWS sellers and customers interact. The AFX team uses AI to automate tasks and provide intelligent insights and recommendations, streamlining workflows for both customer-facing roles and internal support functions. Their approach emphasizes operational efficiency […]

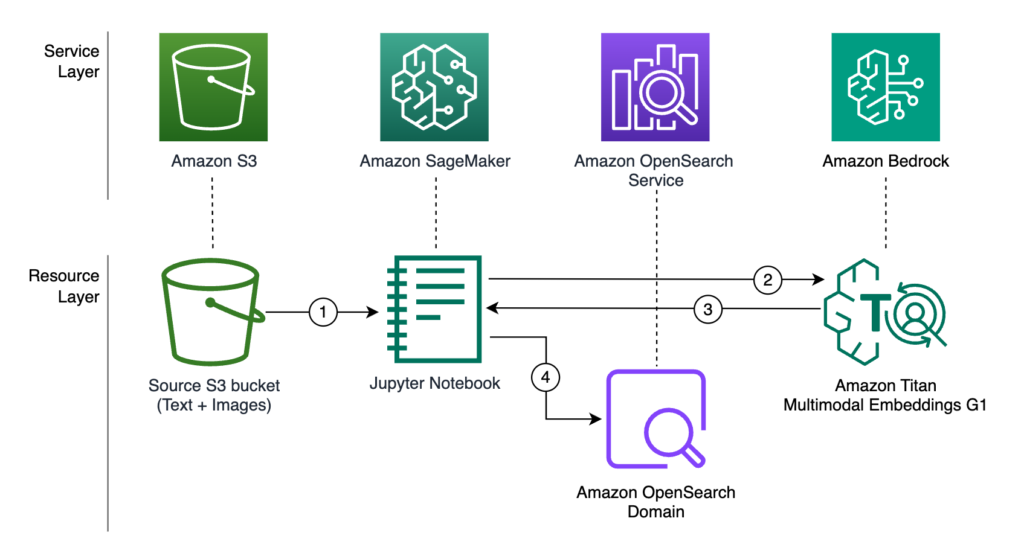

Combine keyword and semantic search for text and images using Amazon Bedrock and Amazon OpenSearch Service

Customers today expect to find products quickly and efficiently through intuitive search functionality. A seamless search journey not only enhances the overall user experience, but also directly impacts key business metrics such as conversion rates, average order value, and customer loyalty. According to a McKinsey study, 78% of consumers are more likely to make repeat […]

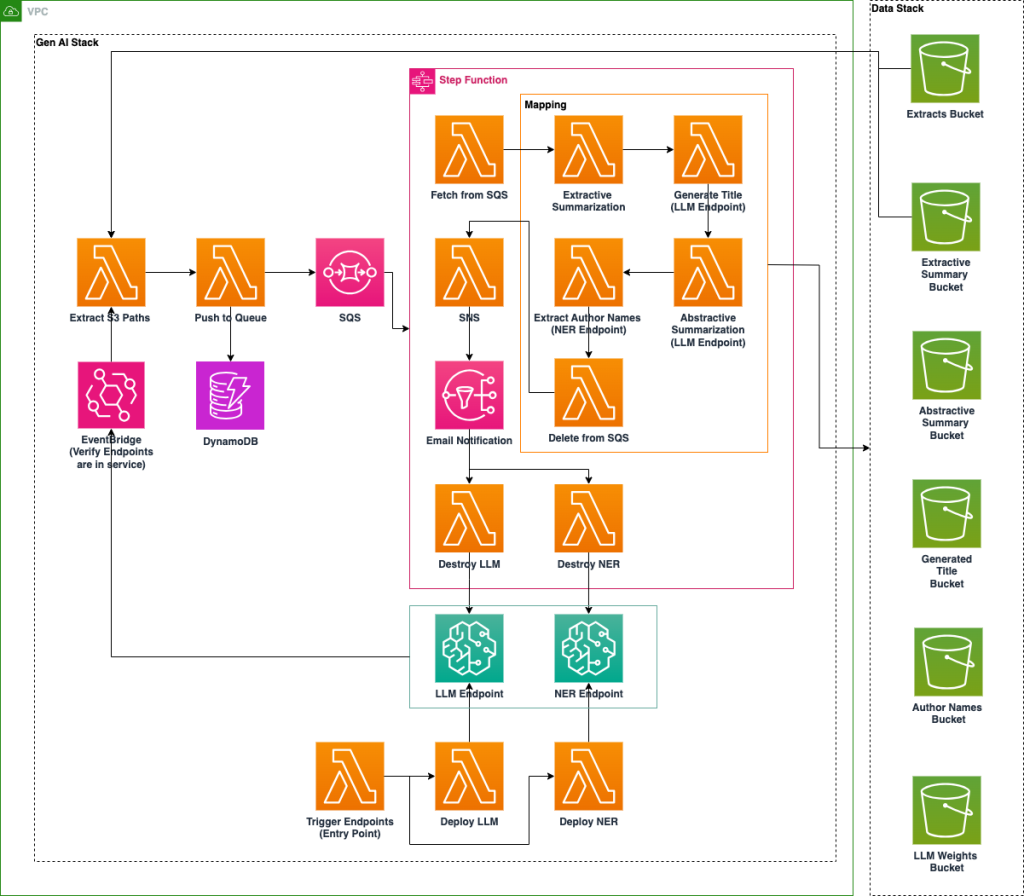

Build an AI-powered document processing platform with open source NER model and LLM on Amazon SageMaker

Archival data in research institutions and national laboratories represents a vast repository of historical knowledge, yet much of it remains inaccessible due to factors like limited metadata and inconsistent labeling. Traditional keyword-based search mechanisms are often insufficient for locating relevant documents efficiently, requiring extensive manual review to extract meaningful insights. To address these challenges, a […]

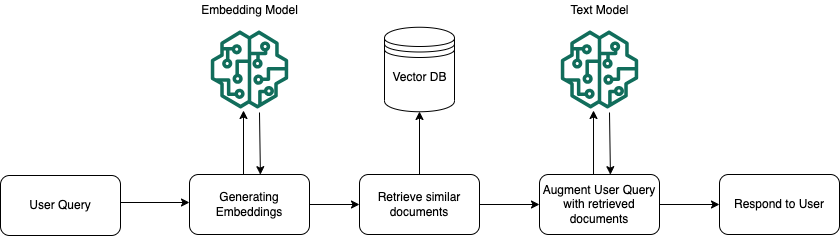

Protect sensitive data in RAG applications with Amazon Bedrock

Retrieval Augmented Generation (RAG) applications have become increasingly popular due to their ability to enhance generative AI tasks with contextually relevant information. Implementing RAG-based applications requires careful attention to security, particularly when handling sensitive data. The protection of personally identifiable information (PII), protected health information (PHI), and confidential business data is crucial because this information […]

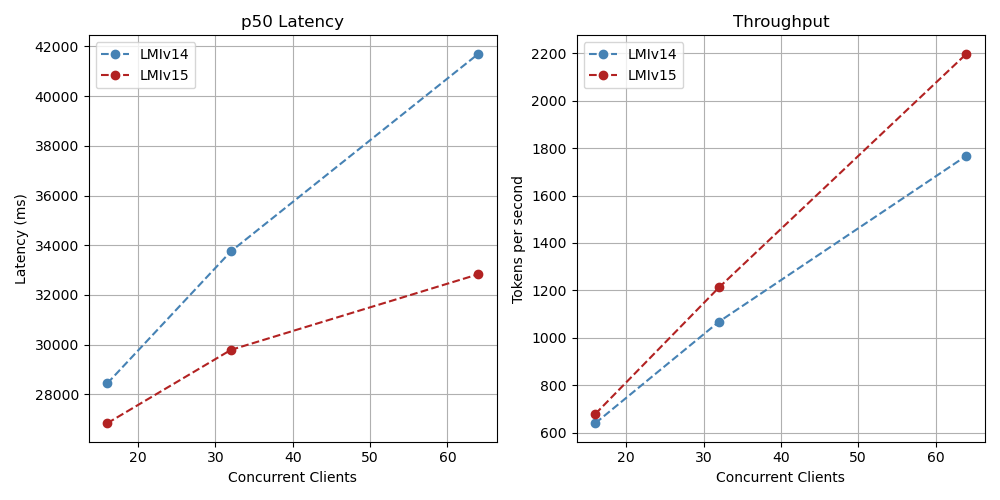

Supercharge your LLM performance with Amazon SageMaker Large Model Inference container v15

Today, we’re excited to announce the launch of Amazon SageMaker Large Model Inference (LMI) container v15, powered by vLLM 0.8.4 with support for the vLLM V1 engine. This version now supports the latest open-source models, such as Meta’s Llama 4 models Scout and Maverick, Google’s Gemma 3, Alibaba’s Qwen, Mistral AI, DeepSeek-R, and many more. […]

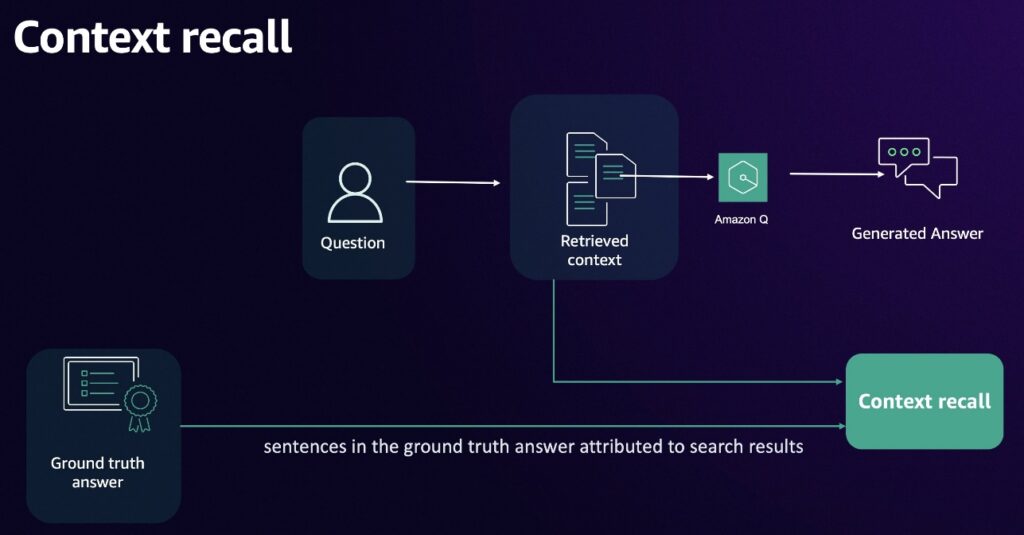

Accuracy evaluation framework for Amazon Q Business – Part 2

In the first post of this series, we introduced a comprehensive evaluation framework for Amazon Q Business, a fully managed Retrieval Augmented Generation (RAG) solution that uses your company’s proprietary data without the complexity of managing large language models (LLMs). The first post focused on selecting appropriate use cases, preparing data, and implementing metrics to […]

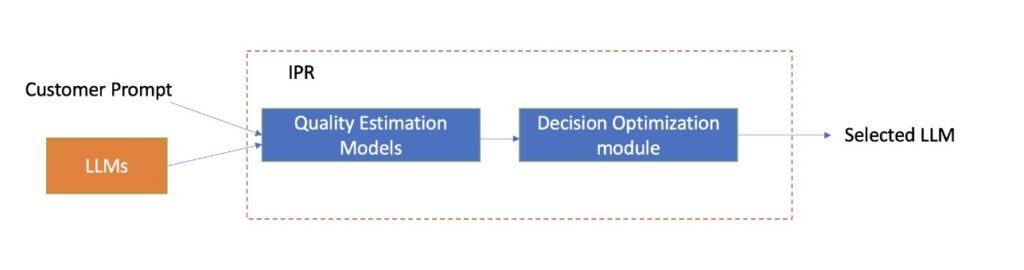

Use Amazon Bedrock Intelligent Prompt Routing for cost and latency benefits

In December, we announced the preview of Amazon Bedrock Intelligent Prompt Routing, which provides a single serverless endpoint to efficiently route requests between different foundation models within the same model family. To do this, Amazon Bedrock Intelligent Prompt Routing dynamically predicts the response quality of each model for a request and routes the request to […]

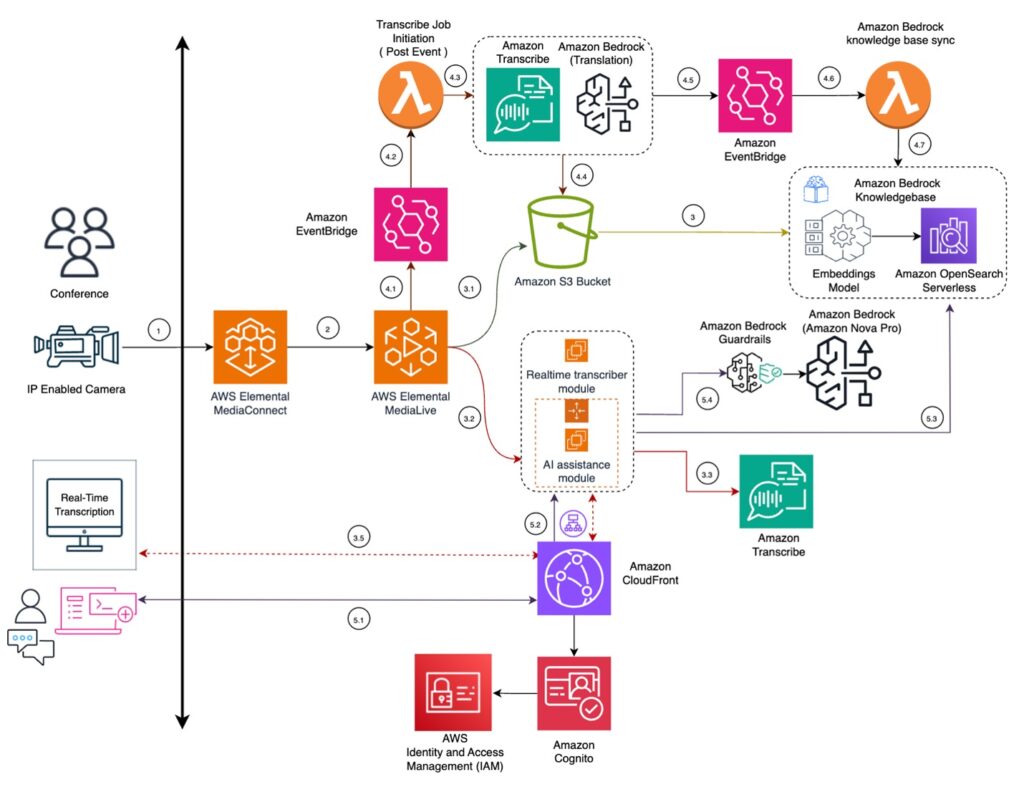

How Infosys improved accessibility for Event Knowledge using Amazon Nova Pro, Amazon Bedrock and Amazon Elemental Media Services

This post is co-written with Saibal Samaddar, Tanushree Halder, and Lokesh Joshi from Infosys Consulting. Critical insights and expertise are concentrated among thought leaders and experts across the globe. Language barriers often hinder the distribution and comprehension of this knowledge during crucial encounters. Workshops, conferences, and training sessions serve as platforms for collaboration and knowledge […]